(0:00) Intro Music

Mike Lieberman (00:06)

I think the biggest thing in security tooling is better user experience. I think that to me is one of the biggest challenges.

CRob (00:25)

Welcome, welcome, welcome to What’s in the SOSS?, the OpenSSF’s podcast where I talk to developers, maintainers, security experts, and people in and around this amazing open source ecosystem. Today, again, we have a real treat. Friend of the show, Mike Lieberman from Kusari is joining us again after – I don’t know if your podcast was toppled from its place of the most listened to before, but we’re gonna see if we can make another hit for us. But we’re here today to talk about some interesting developments that you and your crew are involved in and just things going on in open source security. So how have you been, sir?

Mike Lieberman (01:07)

Well, thank you for having me back and yeah, things are going pretty well.

CRob (01:12)

Well, let’s dive right into it. Recently, and this is a topic that I’m actually dealing with this very moment while we’re recording this podcast, that open source maintainers are just currently overwhelmed by just this deluge of noisy, low quality reports, a lot of them generated by AI tools. So kind of thinking about it with your, many hats you wear, as you know, business owner, a community member, and a long time developer, a security expert. From your perspectives, what is actually creating the most burden today? And think about it through the lens of this project you’re going to share with us in a moment.

Mike Lieberman (01:57)

Yeah, sure. So I think to kind of start, the problem has been the same problem since, know, throughout human history, it is a combination of either bad actors or just lazy people that are I would say the biggest issue here. Right. We have a lot of things like AI reports generating awful sort of vulnerability, know, fake vulnerabilities or whatnot. But if we kind of look at it through the lens of like history through tech, we saw the same thing with any sort of automation, right? When, yeah, exactly. When people could kind of create scripts, hey, let me go in spam this one project with my script. Let me spam a whole bunch of projects with my sort of automation or whatever. And, you know, the same thing sort of happened when people, when we started moving away from mailing lists to sort of GitHub and those sorts of things as well. So I think it’s really to kind of take a step back. It’s kind of how people are using the tools more so than the tools themselves. But I do think when it comes to a lot of the security reports, yeah, it is folks who are just kind of asking an LLM.

Hey, find me find me some zero day. And of course, that’s never going to work because the LLMs don’t have that information. And it’s just it kind of comes back to you need people who understand what they’re doing, using the tools in the right way in order to kind of figure out some of this stuff.

CRob (03:42)

Yeah, human in the loop. Our dear friend, Dr. David Wheeler has a saying, he says, a fool with a tool is still a fool. So again, having those experts in there, helping out is critical. So let’s…

Mike Lieberman (03:43)

Yeah.

CRob (04:00)

You’ve been in this space for a long time, focusing in on supply chain security, and you’ve written or contributed to a ton of tools. And most recently, you all helped create over at Kusari a tool called Inspector. So from just a high level TLDR, how do you see things like Inspector kind of changing this dynamic of getting more people involved or getting more expert knowledge in?

Mike Lieberman (04:26)

Sure. I think like the things. So actually to take a step back, right? There’s a lot of great tools that are being built. The challenge with those tools is, and the way I kind of think about it is like, you know, home security, right? It’s, hey, there’s a ton of tools out there that are helping out with open source security the same way that there’s a ton of tools out there for, you know, a smarter lock. A better security system.

CRob (04:59)

A doorbell that can find your dog.

Mike Lieberman (05:02)

There’s privacy concerns on that one. think, you know, we can all agree on that. But I think to that extent, when it comes to sort of these tools, it’s in how they’re used. And also, the expertise that’s required in how to use them. And also, when building the tools, what sort of expertise went into building the tools? And I think that to me is where the big gap is with just sort of some of the AI related things is you have folks using a very generic system like an LLM. And just saying, hey, LLM become a security expert and do this stuff. And of course the LLM makes a lot of mistakes and whatever. But if you were to kind of say through things like MCP and LLM skills and all these other things, if you have a way of codifying, run open SSF scorecard, run, you know, SLSA and run all of these various things and put all of this together and generate me an SBOM using these tools and whatnot. And then you can take all that, then hand the output to the LLM and say, hey, here’s everything I discovered. Here’s also the code. Help me make sense of it. And I think that to me is kind of where a lot of the benefit is. And again, what I just described is essentially inspector, right?

We’re running all of these various tools, again, that we understand because we’ve contributed to those tools, we’ve helped maintain some of those tools, we have been users of these tools for years. So we understand how they’re supposed to be used. We understand how a human who is, before the age of AI would be using these tools. And we recognize the burden of that expertise. And we’ve sort of encoded it. Had the LLM kind of come in at the last mile and then take all that information, and say, hey, if there is a finding, a vulnerability, great. Where does that vulnerability live? Is that a vulnerability in a core piece of my code, which yes, I need to address right now, or is it like, it’s in a test? Yes, it’s probably something I should fix, but maybe not the biggest issue right this second. And so I think tools like that are really helping because the thing that we found, and again, a user of inspector told us this, and I won’t call out the exact AI tool they were using, but they were using a generic LLM with some stuff. And then they were using inspector. And one of the things that they had said was, wow. Yeah. Like inspector is actually catching the issue with all of the, the, uh, it detected that a particular issue was essentially a, um, a false positive because it looked at a potential remote code execution and looked at all the stuff alongside the code and said, you are clearly have an allow list. So given that you have this allow list, we recognize it’s not a remote code execution, or rather arbitrary code sort of execution attack. And I think it’s stuff like that, that we’re seeing starting to get developed more and more. Whereas a lot of the tradition, I want to say traditional with AI, even though it’s been, you know, like in the past year, everything shifts. Yeah.

When we look at sort of how folks were using LLMs even just a year ago, a lot has shifted and we’re seeing less of these false positives coming out of AI because people are using AI the way it should be used, where it’s you’re supplementing all these other tools that are out.

CRob (08:40)

That’s awesome.

And this might lead into this next question. AI and automation are finding a lot more potential issues, but they’re not always better. And is that what you think that secret sauce of having that security expertise and that helps kind of balance out finding a vulnerability and then kind of sharing that information with the maintainer effectively?

Mike Lieberman (09:08)

Yeah, so I mean, I think when it comes to stuff like that, the way I, you know, I was actually having a conversation with a friend just a few days ago about this issue and I’m reminded of issues just even before AI. And one of the big things that maintainers would ask is, give me a way to reproduce this. If you’re not gonna give me a way to reproduce this, I’m not gonna, you know, I’m not gonna accept your report here and I’m not gonna do a ton of investigation to figure out what you intended to mean.

And I think it’s the same way with AI here, where we’re starting to see with some of the stuff coming out of AI XCC and some other places, we are starting to see tools that are being built that are actually generating the tests and whatnot that can reproduce these vulnerabilities that the LLMs are claiming, or AI tools are claiming. And I think that to me is important because when I look at Daniel from Curl or some of these other folks who are like,

I am so sick of all of these AI reports. It’s like every single one that they’re claiming is an AI report, it’s like they didn’t give me a way to reproduce it. Or even worse, the AI said, here’s a list of steps to reproduce. folks are coming out and saying, that function that you were claiming needs to get run doesn’t exist. And so I’m just thinking to myself, well, why not just write a test that does that thing, you know, and have the LLM write the test, whatever, but prove out that like, hey, an AI tool generated a test and I can run that test and I could see, yep, that is an exploit. That is actually a vulnerability. Now I can go and take that and package it up, it over to, you know, hand it over to the maintainer. And I think if I’m as a maintainer of various open source projects,

If I received something that said, hey, here is a test, you can run that test. And again, by the test, mean like an actual test, a test that makes up other code and tries to do whatever, but an actual test. If you have that, I as a maintainer would say, absolutely, that is a real vulnerability. But I think the thing that we’re seeing right now is we’re seeing all this sort of slop, which is.

Again, it’s just similar to the slop we saw years ago with other sort of automated vulnerability reporting and just generally in tech. And I think the problem here is still kind of comes back to lazy maintainer or sorry, not lazy maintainers, but lazy submitters and just other sort of bad actors who are just like, yeah, I’m just gonna throw a thing out there and hopefully one of these is right. And I’m gonna get it, make a name for myself.

CRob (11:58)

A wise man once said that knowing is half the battle.

Mike Lieberman (12:01)

Yes.

CRob (12:03)

And thinking about it from this maintainer developer perspective, almost always maintainers are volunteers first. They’re there because they have amazing idea they wanna share, they have a problem they’re trying to solve. Some people are paid to do a specific thing, but the majority of folks are volunteers first. And security experts 12th, 18th, security is not necessarily a core skill that most developers have. what design choices, kind of thinking about when you were looking at Inspector, what design choices did you make to help meet the maintainers where they are, where they are experts in languages or frameworks or kind of these techniques or algorithms? But how are you helping them where they are rather than expecting them to become a full-time securityologist like you or I?

Mike Lieberman (12:58)

So we have a mantra here at Kusari, which is essentially just don’t piss off the engineers, right? As engineers ourselves, as folks who, myself, I am a software engineer first, or really more of a dev ops, dev sec ops engineer first, became more of a software engineer over time. But one of the big sort of mantras was, one of the things that always frustrated me was you have to do all the security stuff.

And they were burdens to my daily job, right? Where I was not being, you know, again, this is me both as a maintainer of open source projects and also just, hey, I get paid as an engineer or whatever. What, but at the end of the day, I wasn’t incentivized to do secure things. I might’ve been yelled at. I might’ve been told thou shalt do this security thing, but my incentives were getting out this new feature, making my customer or my user happy, right?

And so when it comes to those sorts of things, that’s kind of how we’ve encoded all of this, where if somebody told me, hey, Mike, you put in a potential remote code execution attack or arbitrary code, whatever it is, like you put a SQL injection attack or some other, you’re not handling this off thing correctly. If you told me, yeah, that’s the thing. And you told me what I might need to look at. yeah, let me get on that. Let me fix that.

If you were to tell me, hey, you’re using a library that isn’t maintained and that everybody has mostly moved over to this other library, cool. I’ll, I’ll work on that, but don’t make the burden. Hey, there’s a, this library is unmaintained. Okay. What am I, what am I supposed to do about it? I don’t know what I’m supposed to do about it. Help me with suggestions. So when it comes to inspector, those are the sorts of things that we sort of baked in is we’re not just telling you this project. Is it maintained?

We’re telling you, hey, this project isn’t maintained, but it’s used in just one test. So maybe it’s not the immediate thing that needs to be fixed versus, hey, this thing is completely unmaintained and it’s potentially vulnerable. And this is something new you’re adding. Like this isn’t something that already exists. This is just bad practice. Like you should probably not include this new thing. Or, you know, and again, providing the suggestions to the user on what to actually do about it.

And some of those things can then be, know, know, inspector has a CLI tool that you can use. And I use it myself with Claude where, Hey, I run it kind of come in and, you know, uh, fix it. And like, it works pretty well. So I think again, it’s, it’s having, um, it’s, it’s the combination of things to sort of make sure that it, as an engineer, you know, you’re not being asked to become an expert in this thing, right? Uh, it’s okay to ask an engineer.

You are a database expert, you should be reasonable at securing databases, but securing the underlying OS and yada yada, hey, maybe you don’t need to be an expert in that. And that’s where tools like Inspector I think really help is they’re the ones who are being experts. Again, kind of going back to that, the home analogy, right? If I run a house, if I have a house, I don’t need to know the inner mechanics of know, a pin tumbler lock and yada, yada and, and, and how the various cameras, you know, that that are looking at the outside of my house, how they all interoperate. No, I just need to know, are they working if something kind of, you know, the battery died on this, I know how to change your battery, let me kind of focus on that. But if they were kind of come in and say, No, no, you need to understand the innards of the networking and you need to understand audio visual processing, I’d be like, No, just not gonna work.

So again, make sure that developers can focus just on what their experts in, and what their primary responsibility is, which is usually to the user. And yes, security is a responsibility there, but they’re not going to be generic security experts. And so what can we do to help them hold their hand and tell them what needs to be done in a way that they can kind of say, yeah, you’re asking me to do two or three small little things. Awesome. By the way, we here at Kusari have made Inspector free for open source, but not just open source, specifically for CNCF and open SSF. You have full sort of unfettered access, no rate limits, no quotas. And love to see folks sign up. The website is kusari.cloud. And yeah, yeah, I want to see folks using it.

CRob (17:45)

It’s really interesting and I love the focus on again, because you’re all you grew up through this. are in software engineer. So I love the focus on trying to how to relieve that burden from these, this army of volunteers. So let’s do something else we do often in cybersecurity. Let’s get our crystal ball out and, you know, thinking ahead from your perspective, what do you think, you know, good security tooling for open source looks like in three to five years?

Mike Lieberman (18:16)

I think the biggest thing in security tooling is better user experience. think that to me is one of the biggest challenges. And right today, and I think that’s where a lot of folks are focusing their efforts, it’s, you we need to some extent, you know, and I know, like, the first thing that came to mind, is Kubernetes, but for security, right? And I recognize that Kubernetes, depending on who you talk to, you know, YAML files,

But no, it really did democratize and make simpler the orchestrating complex container workloads, right? And I think when it comes to security, user experience is often kind of a secondary concern compared to just the, did I prevent the security, you the issue, but that’s kind of, as our world continues to get more complex and complicated and things are scaling up and we’re having AI and all these different things. The need for security continues to increase more and more every day. But with that said, if the answer is using these security tools requires, you know, tons of certifications and whatnot for just to use the security tool, right? Not to become an expert, but just to use the security tool, if you need to be an expert in all these different things, it becomes super difficult, nobody’s gonna do it. So I think we’re gonna start to see to some extent, more tools like Inspector, but also in addition to that, more tools like, and I know we’re working on this in OpenSSF, tools that make adopting of Salsa trivial for the average project. Tools that help just sort of generally with security build out that UX, make it simpler for the average engineer to do that. Similar to how we saw stuff

in that space with DevOps, right? Where you had developers and operations, those worlds kind of became more combined. And what happened was you had tools like your Terraforms or, you know, Open Tofu and Ansible and all of these great things that kind of came out of that space to kind of make it easier for both folks who are focused in operations to get a little closer to developers and then developers to actually also help out with some of the operations, infrastructure, engineering, those sorts of things. And I think we’re gonna start to see more of that as time kind of goes on where those like, I’m gonna call like security primitives are more encoded in the tools we have. So I think we’re gonna start to see a lot of tools out there become secure by design and have a lot of the security features baked in. And then also the security tools that we have just generally become a little bit simpler and where areas where they can’t be super simple, we’re gonna see tools more tools like Inspector that kind of come in and operate similar to how you might imagine the security expert to kind of come in and put the pieces together, which again, doesn’t eliminate the security engineer. I just want to be clear, like security engineers are very much still needed. The challenge is the security engineer is now being tasked. Whereas before you had to be an expert in a small set of domains. Now you’re being asked to be an expert across everything and they need to understand that they’re going to be the ones who are like taking these new security tools and given that better UX are going to be able to scale that across, you know, 10,000 projects, you know, a hundred different AI agents, all of this, like, you know, a million containers, all of those things. So I think we’re going to start seeing a lot more of the security tools working better to scale up what we’re doing.

CRob (22:04)

That is an amazing vision. look forward to observing that over the years. Hopefully your vision becomes a reality. Yeah, thank And as we’re winding down, do you have any closing thoughts or any call to action for the audience?

Mike Lieberman (22:19)

Yeah, I think the, so there’s two big ones. One is, hey, if you’re a maintainer and engineer, right? I know you care about security because even when I was not a security engineer, I cared about security. So what I want to hear from maintainers is how can the open source world help, right? How can we help you not get clobbered by a million?

letters from lawyers and other people demanding security features in your stuff. How can we, as an open source community, help out, open source security community, help out? How can we make the tools easier? How can we make sure that those tools fit your needs? And that includes whether it’s inspector or, you know, other things, hey. And on that note as well, you know, CNCF and OpenSSF projects can use inspector..

And the other call to action, I know I say this a lot, large organizations that are using open source, it is your responsibility to provide the incentives to make sure that open source is more secure. Like we can all demand, hey, we need better open source security tooling. We need this, that, and the other thing. But if nobody’s paying for it, if at the end of the day, you know, a random engineer who’s making that open source security tool, if they can’t pay the bills, they’re not going to do that. If they are getting clobbered with a million different feature requests, it’s just not going to work. So we need to make sure. And I know that there’s things like the sovereign tech fund want to see more of that. But just sort of generally, I think it needs to come from these multi billion, multi trillion dollar companies coming in and saying, hey, we are willing to foot a good deal of this bill right in order to make the world more secure for everybody.

CRob (24:17)

Those are some wise words and also I think a wonderful vision we all can work towards together. Mike Lieberman from Kusari, thank you my friend. I loved having you on. And with that, we’re gonna call this a wrap. I want everyone to stay cyber safe and sound and have a great day.

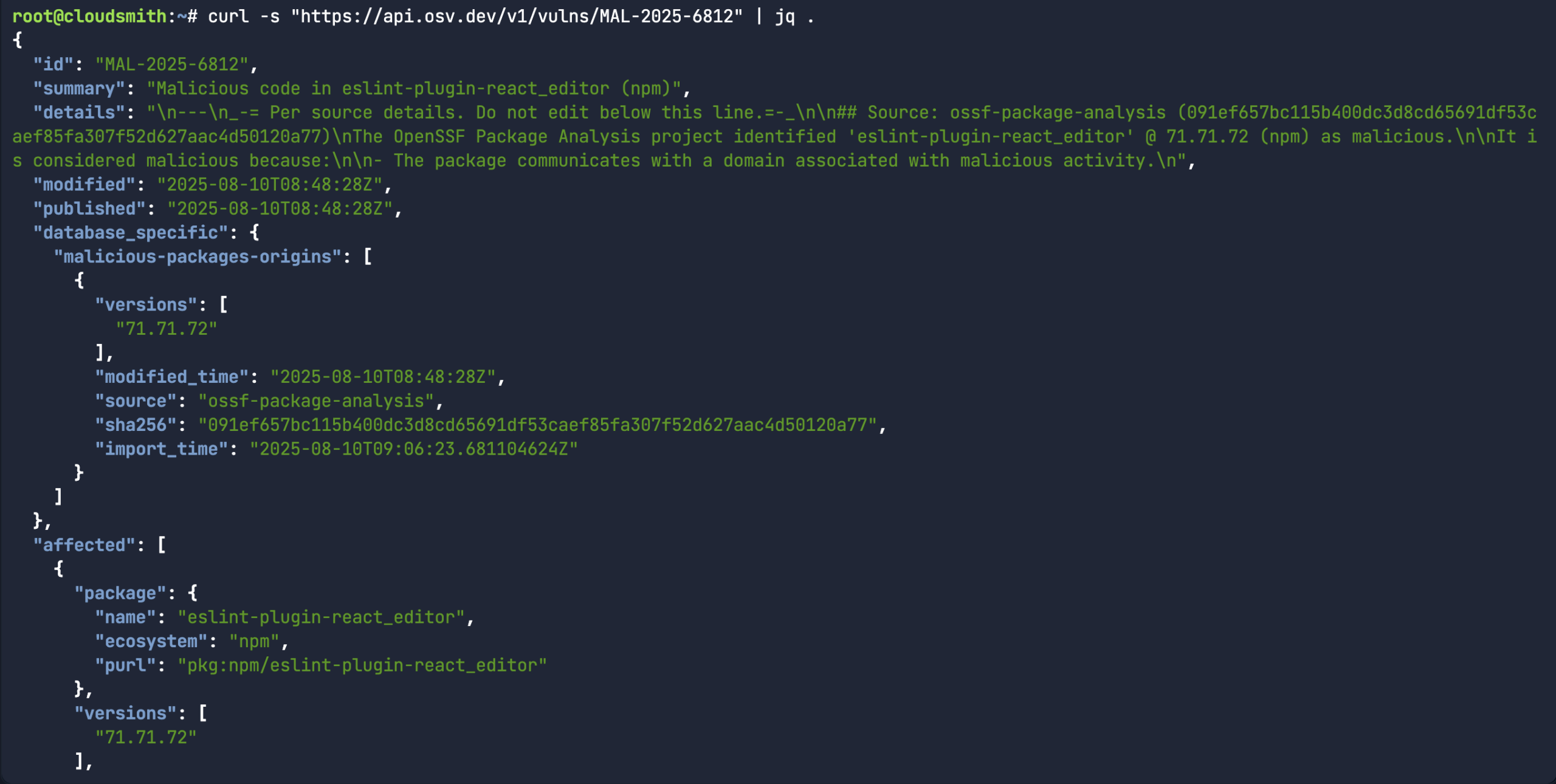

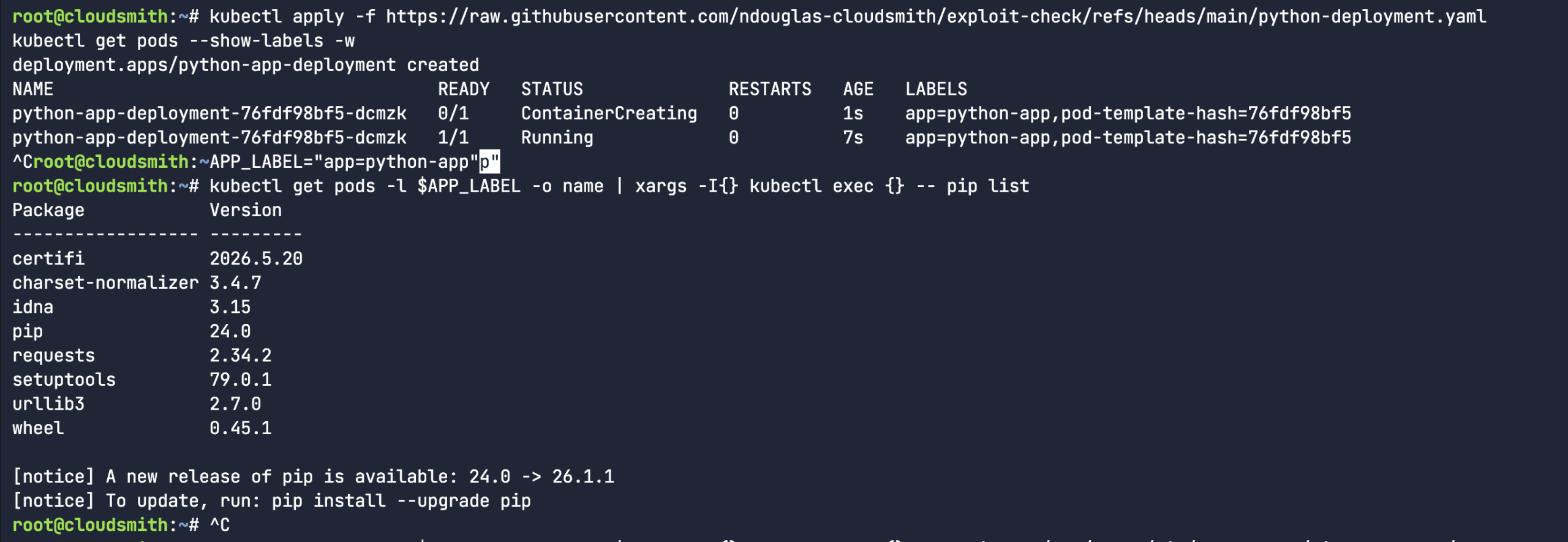

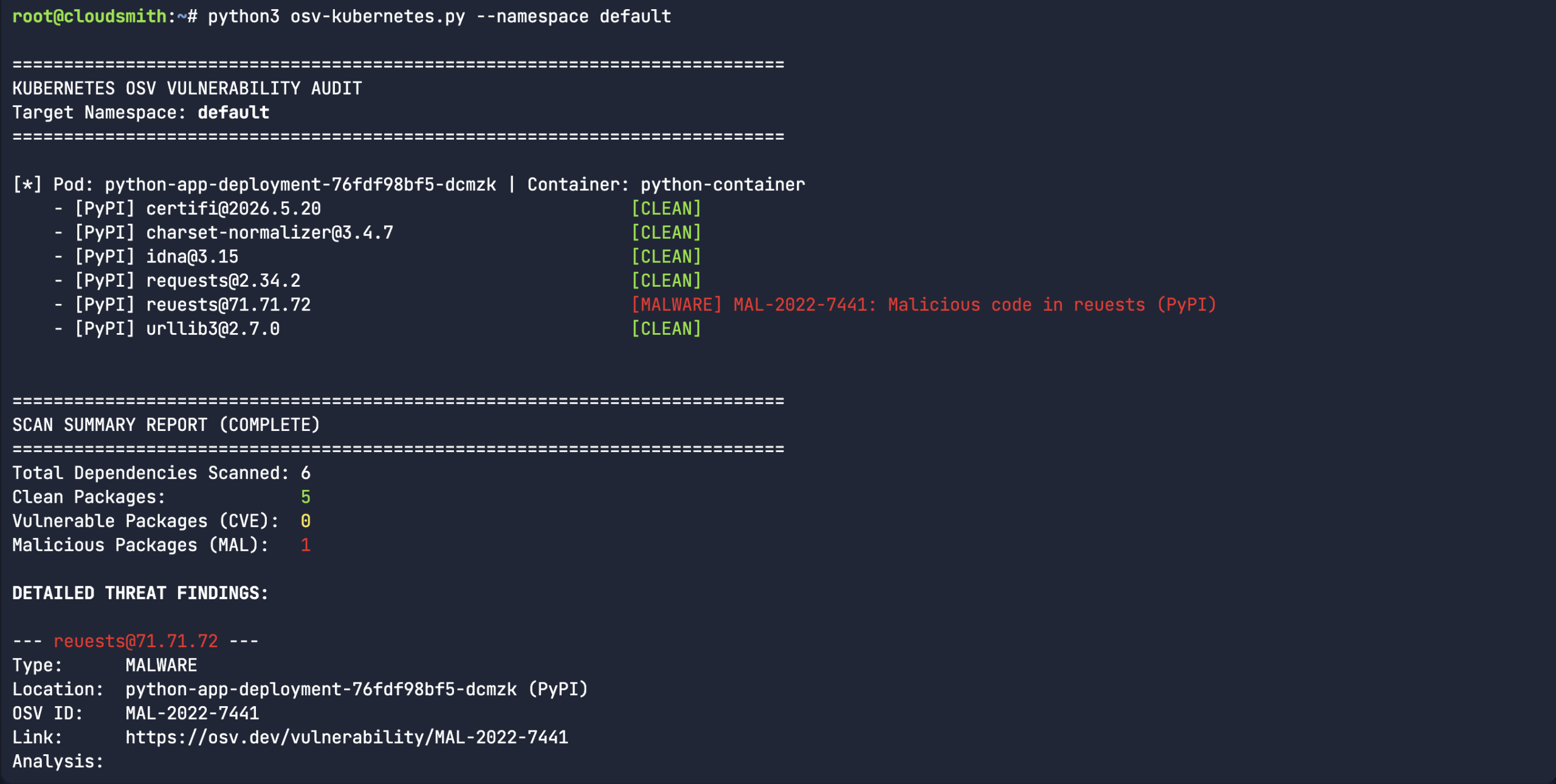

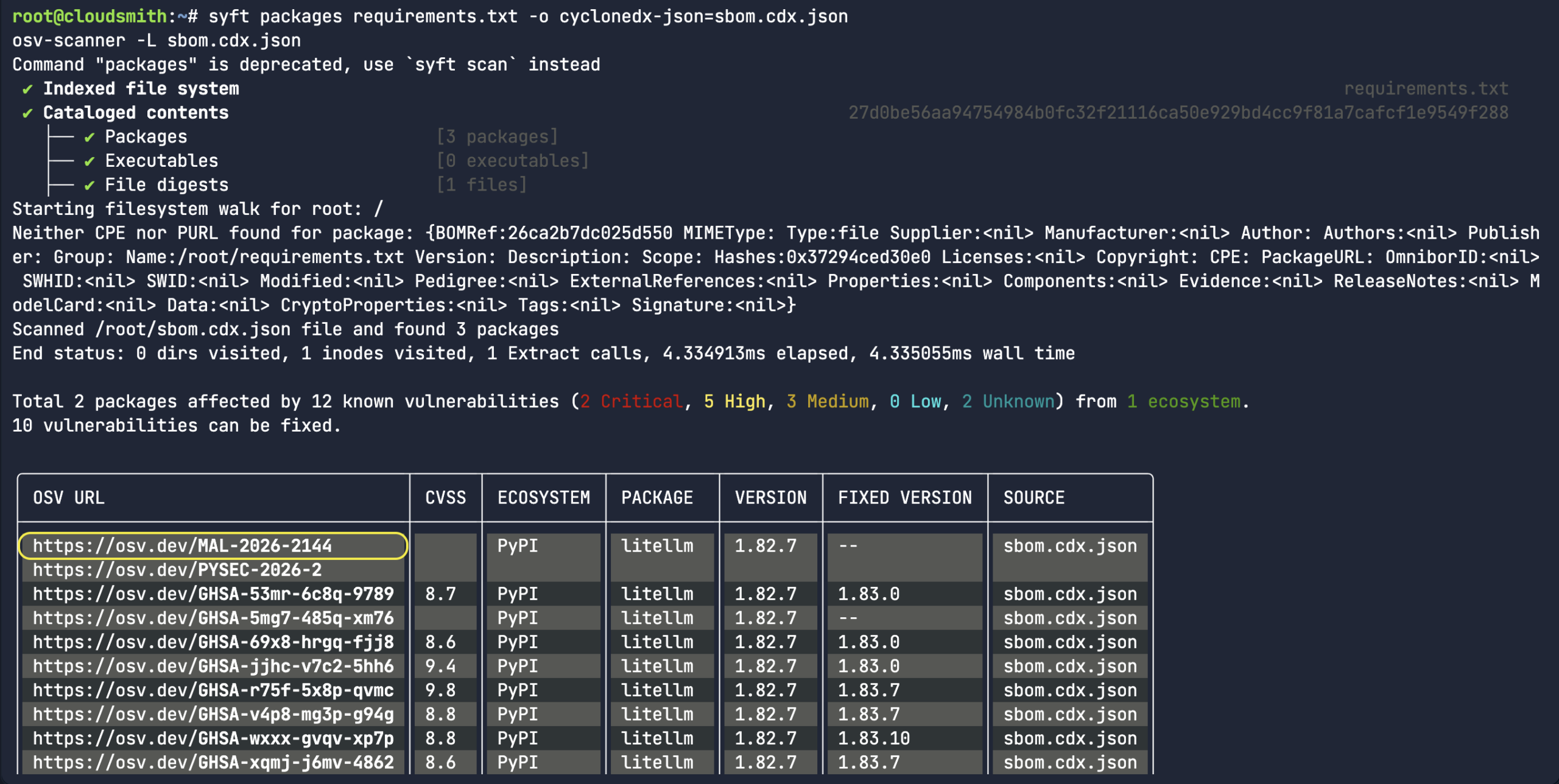

While the above command does return a bunch of information about a specific malicious package record, it would assume you already knew what the malicious package ID was in the first place. A more common use-case for the API is to look for a specific package name/version and the associated open source upstream source (ie:

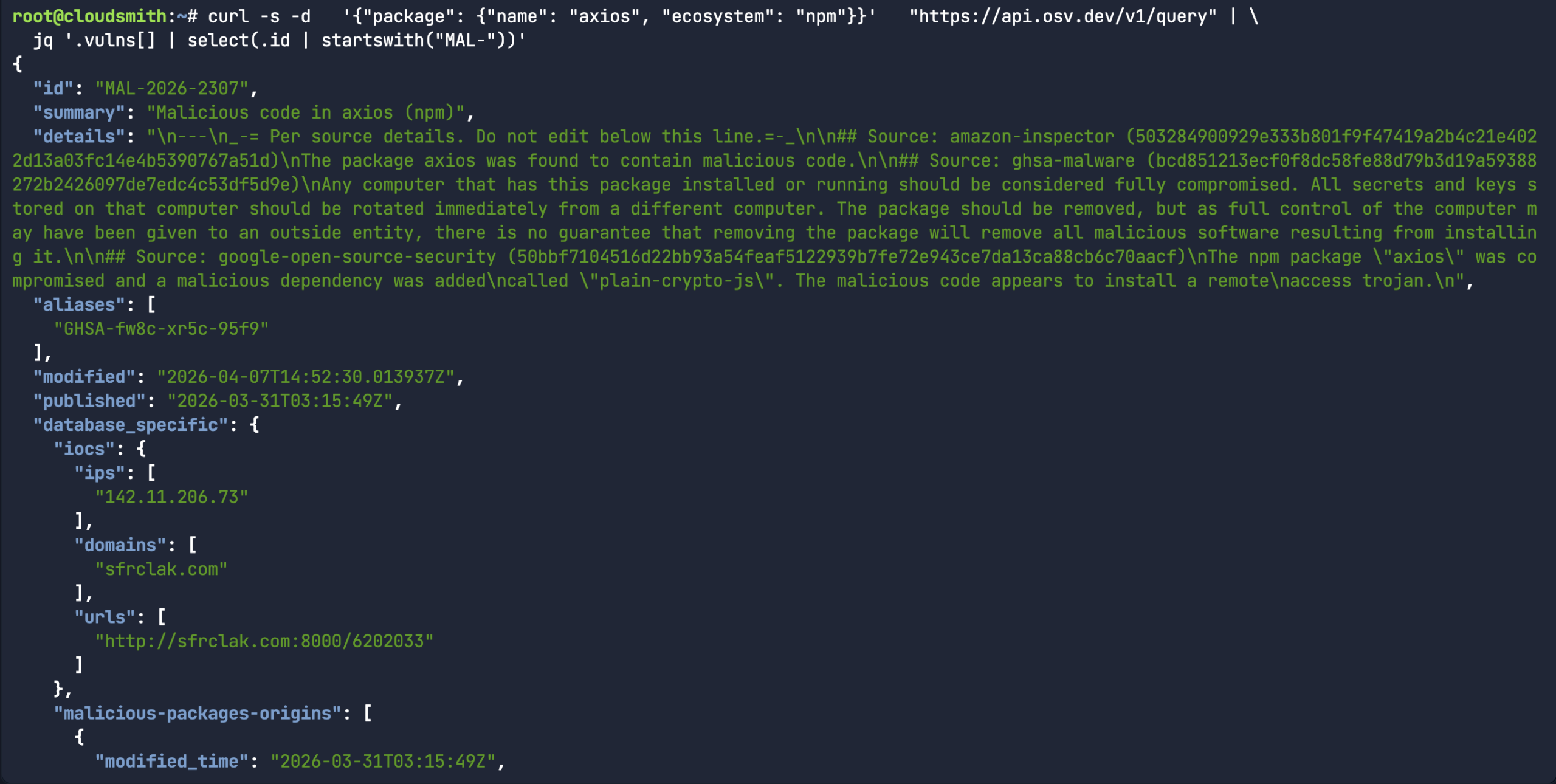

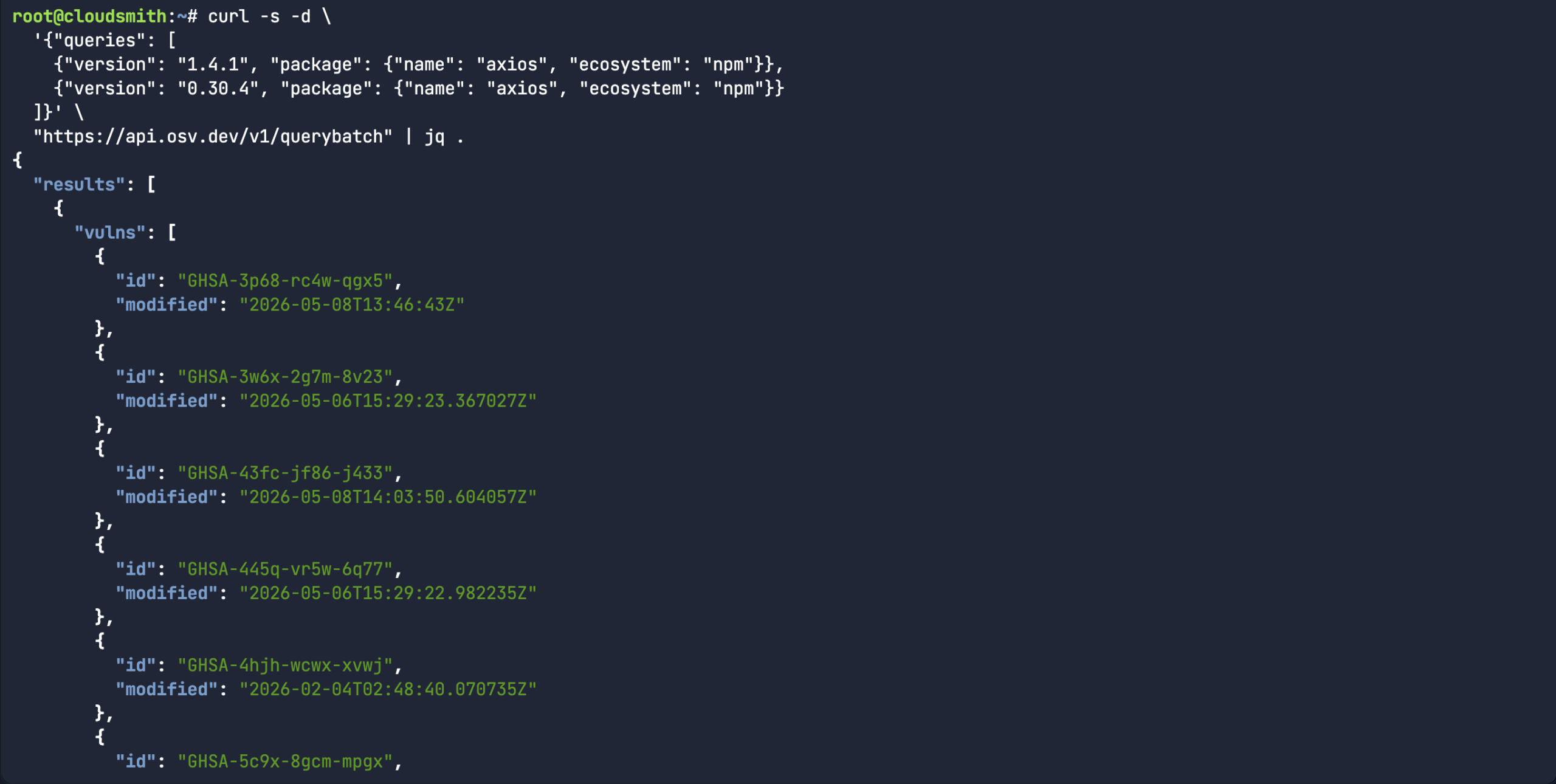

While the above command does return a bunch of information about a specific malicious package record, it would assume you already knew what the malicious package ID was in the first place. A more common use-case for the API is to look for a specific package name/version and the associated open source upstream source (ie:  Or in the case of the

Or in the case of the

So, I proceeded to create a fake python library (rather than downloading an actual malicious software package). I mean, the package name and version were real, but I fabricated the entire content of the package. It’s a totally dummy package – as you can see from the below echo commands. Let’s see if our custom

So, I proceeded to create a fake python library (rather than downloading an actual malicious software package). I mean, the package name and version were real, but I fabricated the entire content of the package. It’s a totally dummy package – as you can see from the below echo commands. Let’s see if our custom  So, we created a fake typosquatted Python package. “

So, we created a fake typosquatted Python package. “

Nigel Douglas is the Head of Developer Relations at Cloudsmith. He champions Cloudsmith’s developer ecosystem by creating compelling educational content, engaging with developer communities, and promoting software supply chain security best practices. Nigel helps build and shape the DevOps community through events, tutorials, and innovative programs.

Nigel Douglas is the Head of Developer Relations at Cloudsmith. He champions Cloudsmith’s developer ecosystem by creating compelling educational content, engaging with developer communities, and promoting software supply chain security best practices. Nigel helps build and shape the DevOps community through events, tutorials, and innovative programs.

Tracy Ragan is the Founder and Chief Executive Officer of DeployHub and a recognized authority in secure software delivery and software supply chain defense. She has served on the Governing Boards of the

Tracy Ragan is the Founder and Chief Executive Officer of DeployHub and a recognized authority in secure software delivery and software supply chain defense. She has served on the Governing Boards of the

Ben Cotton is the open source community lead at Kusari, where he contributes to GUAC and leads the OSPS Baseline SIG. He has over a decade of leadership experience in Fedora and other open source communities. His career has taken him through the public and private sector in roles that include desktop support, high-performance computing administration, marketing, and program management. Ben is the author of

Ben Cotton is the open source community lead at Kusari, where he contributes to GUAC and leads the OSPS Baseline SIG. He has over a decade of leadership experience in Fedora and other open source communities. His career has taken him through the public and private sector in roles that include desktop support, high-performance computing administration, marketing, and program management. Ben is the author of  Dejan Bosanac is a software engineer at Red Hat with an interest in open source and integrating systems. Over the years he’s been involved in various open source communities tackling problems like: Software supply chain security, IoT cloud platforms and Edge computing and Enterprise messaging.

Dejan Bosanac is a software engineer at Red Hat with an interest in open source and integrating systems. Over the years he’s been involved in various open source communities tackling problems like: Software supply chain security, IoT cloud platforms and Edge computing and Enterprise messaging.

Sarah Evans

Sarah Evans Andrey Shorov

Andrey Shorov

For nearly 20 years, Daniel has worked as a software engineer in the Defense and Aerospace industry. His experience ranges from embedded device drivers to large logistics and information systems. In recent years, he has focused on helping legacy programs adopt modern DevOps practices. Daniel works with the open source community as part of Lockheed Martin’s Open Source Program Office.

For nearly 20 years, Daniel has worked as a software engineer in the Defense and Aerospace industry. His experience ranges from embedded device drivers to large logistics and information systems. In recent years, he has focused on helping legacy programs adopt modern DevOps practices. Daniel works with the open source community as part of Lockheed Martin’s Open Source Program Office.