How Red Hat and OpenSSF are translating regulatory mandates into scalable open source community practices

Challenge

The European Union Cyber Resilience Act (CRA) introduces legally binding cybersecurity requirements for products with digital elements (including software) placed on the EU market. Although the CRA is designed to bolster digital safety, its requirements depend on standards historically shaped by assumptions about proprietary software.

For Red Hat, whose products rely on thousands of upstream open source components, the risk was clear. If CRA standards failed to reflect the reality of how open source is built, the resulting compliance hurdles could increase cost and legal uncertainty for the enterprise while placing an unsustainable administrative burden on voluntary community maintainers.

Red Hat Security Communities Lead Roman Zhukov, together with fellow Red Hatters from Product Security and Public Policy (Jaroslav Reznik, Pavel Hruza, and James Lovegrove), shared these insights from their work on the CRA standards:

| “Working on traditional industry standardization ‘behind closed doors’ started as a big challenge for us, upstream-minded people, who used to openly share and collaborate on all the work that we do. But that was important. Because if those standards didn’t reflect how open source actually works, there would be a real risk of imposing corporate-level liability on the community, because of persistent compliance pressure by enterprise adopters.” |

Solution

As a Premier Member of the OpenSSF, Red Hat transitioned from collaboration to leadership, engaging with the European Commission to advocate for a clear understanding of open source development methods and helping shape CRA standards, policy, and implementation guidance.

Through OpenSSF and direct participation in European standards bodies, Red Hat has helped bring open source development practices into CRA standards and technical guidelines, including:

- Hardened development lifecycles: Advancing expectations that respect community workflows

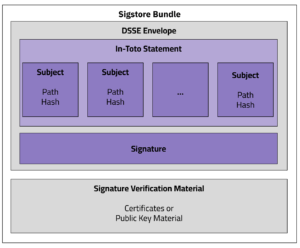

- SBOM and vulnerability handling: Streamlining how data is shared across the supply chain

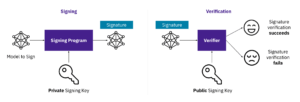

- Supply chain integrity: Promoting frameworks that can verify security without slowing innovation

Red Hat also championed OpenSSF frameworks, including the OpenSSF Security Baseline and SLSA, as essential reference points for organizations preparing for CRA compliance.

Together, these efforts provided regulators and manufacturers with practical, community-vetted guidance for implementing CRA requirements. This helps shift the responsibility back to manufacturers and stewards through consistent data discovery rather than placing the burden of evidence upon voluntary communities.

Red Hat’s Portfolio Security Architect Emily Fox offered her perspective on stewardship and shared responsibility under the CRA:

| “True stewardship shields open source creators from legislative burden. We don’t ask maintainers to become commercial suppliers; we step in to absorb the complexity, turning commercial compliance mandates into engagement opportunities that drive real security for everyone.” |

Results

Red Hat’s leadership within OpenSSF helped deliver ecosystem-wide impact:

- Standardization Alignment: State-of-the-art secure development practices were incorporated into EU CRA technical guidelines

- Framework Recognition: The OpenSSF Security Baseline and SLSA are now recognized as reference frameworks for secure development

- Reduced Friction: Lowered compliance barriers across thousands of upstream open source components

- Increased Confidence: Bolstered regulator and enterprise trust in open source maturity

Why This Matters

Open source software underpins an estimated 90% of modern technology stacks. By leading through OpenSSF, Red Hat helped ensure the CRA reinforces shared responsibility and practical security improvements rather than shifting administrative weight onto open source maintainers.

About

Roman Zhukov is a cybersecurity expert, engineer, and leader with over 17 years of hands-on experience securing complex systems and software products at scale. At Red Hat, Roman leads open source security strategy, upstream collaboration, and cross-industry initiatives focused on building trusted ecosystems. He is an active contributor to open source security and co-chair of the OpenSSF Global Cyber Policy WG.

Emily Fox is a visionary security leader whose sustained contributions have profoundly shaped both internal company strategy and the broader open source industry. With over 15 years of experience, she has consistently operated at the intersection of deep technical expertise and strategic leadership, driving critical initiatives in cloud native security, software supply chain integrity, post-quantum cryptography, and zero trust architecture at top-tier organizations including Red Hat, Apple, and the National Security Agency. Her career is marked by a rare ability to not only architect complex, cutting-edge solutions but also to lead global communities, influence industry standards, and mentor the next generation of technologists.

Mihai Maruseac is a member of the Google Open Source Security Team (GOSST), working on Supply Chain Security for ML. He is a co-lead on a Secure AI Framework (SAIF) workstream from Google. Under OpenSSF, Mihai chairs the AI/ML working group and the model signing project. Mihai is also a GUAC maintainer. Before joining GOSST, Mihai created the TensorFlow Security team and prior to Google, he worked on adding Differential Privacy to Machine Learning algorithms. Mihai has a PhD in Differential Privacy from UMass Boston.

Daniel Major is a Distinguished Security Architect at NVIDIA, where he provides security leadership in areas such as code signing, device PKI, ML deployments and mobile operating systems. Previously, as Principal Security Architect at BlackBerry, he played a key role in leading the mobile phone division’s transition from BlackBerry 10 OS to Android. When not working, Daniel can be found planning his next travel adventure.